Machine Learning Strategist Lauren Perry Shares Trusted AI Framework with Engineering Students, Faculty

Aerospace Corporation Senior Project Engineer Discusses Historical AI Failures and How to Prevent Them

November 30, 2022

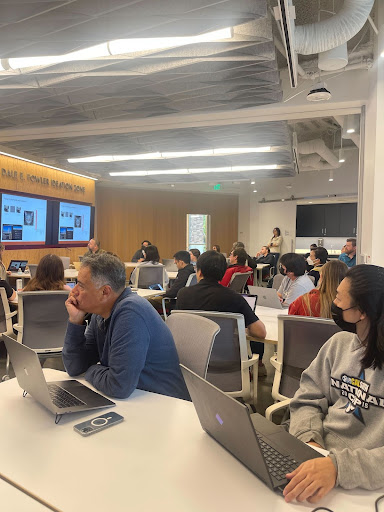

Artificial Intelligence (AI) and Machine Learning Strategist Lauren Perry recently spoke to the Fowler School of Engineering (FSE) community about noteworthy failures of AI algorithms. Addressing a packed crowd in the Ideation Zone, Perry outlined what went wrong in each example and how to introduce frameworks designed to prevent history from repeating itself.

Perry began her talk by asking students and faculty to share AI failures they had heard of, from unreliable facial recognition software to vehicles crashing while on autopilot. The road to developing AI is lined with failures like these, and Perry treated the subject with urgency, stressing the importance of recognizing what caused these past mistakes.

“Algorithms don’t always work, and when they don’t, it can be funny–such as creating art that depicts cooked salmon in a river–all the way to actual disastrous consequences,” Perry stated.

Throughout the conversation, Perry highlighted mistakes to look out for when developing AI. Her list ranged from small-scale failures–such as when misunderstood data input caused a sports camera to mistake a referee’s bald head for a soccer ball–to dangerous or even fatal errors, the most devastating of which involved plane crashes resulting from an uncontrollable algorithm that pilots could not override. Understanding how these real-world failures occur in the first place, Perry explained, is the preliminary step in algorithm development.

“There are real-life things that are happening in your data, not just purposeful, adversarial inputs that can drastically affect your response, so you want to test and make sure that you’re making the right decisions about your data so that these things can’t happen,” Perry said.

How, then, can engineers actively prevent these errors from occurring in new algorithms? Dr. Erik Linstead, Senior Associate Dean of FSE, invited Perry to help address this core concern, which is shared not only by students and faculty but also by everyday people who may one day use this technology.

“A natural question everyone is interested in is ‘how do we know we can trust AI, how do we know that something is safe to do?’ Finding someone who’s working with these ideas like her is important,” said Linstead. “Her ability to tie in the concepts to real-world failures made it a really useful talk.”

Perry’s work as a Senior Project Engineer at The Aerospace Corporation centers on the formation and application of trusted AI frameworks. These are designed to help engineers pinpoint errors long before a new system makes its debut, especially when protecting the safety and investments of others is a top priority.

During the talk, Perry shared her framework with students and faculty. Its structure highlights four core areas of focus: defining needs to gauge whether AI is the most appropriate solution, specifying objectives in data, assessing against trust attributes, and deploying and maintaining trust in operations. By applying this concise framework, engineers like Perry can gain confidence that their technology will perform its tasks correctly and with good intentions.

This understanding is vital within Perry’s field of space domain awareness.

“We bring a lot of AI and machine learning into the space industry as part of my job at Aerospace,” Perry shared. “You’re putting AI and machine learning on these multimillion-dollar spacecraft or multibillion-dollar programs. Instead of just applying an algorithm, it’s about understanding the different facets of what the algorithm does in order to actually trust it.”

Linstead cited Perry’s tangible experience outside of academia as a valuable asset to the conversation. “She is actually applying these concepts in-industry in really interesting use cases for big projects. It’s fun especially for the students to see that real-world connection,” Linstead explained.

Perry’s talk concluded with a note of encouragement, reminding FSE students and faculty of the purpose behind her words: to instill in them the understanding required to design meaningful, effective, and trustworthy AI. “I hope that when you are creating algorithms going forward, you’re doing more than just looking at your accuracy metrics,” Perry said. “That way, your algorithms can actually make it into operations and make a difference out there.”